In this article, let’s build a multi-container full stack application deployed through Docker Compose. We’ll develop an Angular application that consumes data from an ASP.NET Core (.NET 6) Web API, with each application running in its own container. We’ll orchestrate the deployment and execution of this entire stack through Docker Compose.

What is Docker Compose?

Docker Compose is a tool for defining and running multi-container applications. Its configuration file (docker-compose.yaml) contains instructions for Docker about how each service should be built from its respective Dockerfile and how it should run.

This makes it easier for developers to create multi-component application stacks that might contain a front-end, a back-end, caches, databases and so on. It also eases the interactions between these components by taking care of the internal networking and name resolution for us.

While a Dockerfile describes how a container image is created and customized from a base image and a set of instructions, the Docker Compose file works on top of the Dockerfiles and helps developers run containers with more complex runtime specifications such as port mappings, volumes and so on.

Building a Multi-Component Application Stack through Docker Compose

To demonstrate how this actually works, let’s build a full stack application with a front-end and a back-end. The front-end is an Angular application which calls an ASP.NET Core (.NET 6) API to fetch data and render it on a grid.

The front-end and the back-end components exist as individual decoupled applications, and are deployed as separate containers. Each component runs independently in its own isolated environment.

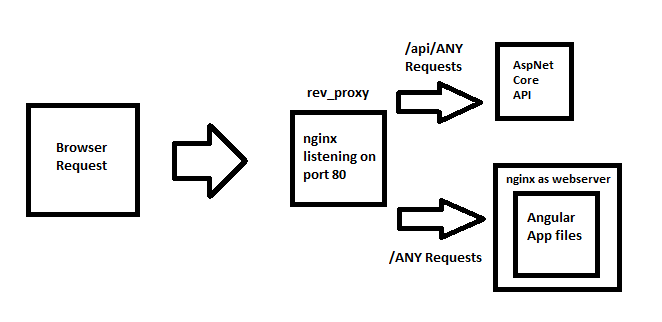

How do the requests pass between the components? Enter NGINX.

The NGINX web server works as a reverse proxy that routes requests between the front-end and the back-end. It also acts as the interface to the outside network: it receives incoming requests and forwards each one to the appropriate component based on the request path.

So when a user navigates through the pages of the front-end application, the requests are routed internally by this NGINX proxy.

Designing the Routing Flow

NGINX can be configured to proxy requests based on their request path. For example, we can configure the proxy to route all requests that start with ‘/api’ to the back-end component, which is the ASP.NET Core (.NET 6) Web API that returns data.

We can make the front-end component the default handler: if the request path doesn’t start with the prefix /api, NGINX treats it as a request for the Angular component and routes it there.
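To make the matching rule concrete, here is a small TypeScript model of how NGINX chooses between plain prefix locations: among all prefixes that match the request path, the longest one wins. It is a simplified sketch, not NGINX code. It only models the two prefix locations used in this article and ignores regex locations and the “=” and “^~” modifiers. The names PrefixLocation and selectUpstream are hypothetical, and “/api/items” is an assumed example path.

```typescript
// Simplified model of NGINX prefix-location matching for the two locations
// in default.conf. Regex locations and the "=" / "^~" modifiers are ignored.
interface PrefixLocation {
  prefix: string;   // the string after "location"
  upstream: string; // the upstream named in proxy_pass
}

const locations: PrefixLocation[] = [
  { prefix: "/", upstream: "fe" },
  { prefix: "/api", upstream: "be" },
];

// Returns the upstream of the longest prefix that matches the request path.
function selectUpstream(path: string, locs: PrefixLocation[] = locations): string | undefined {
  let best: PrefixLocation | undefined;
  for (const loc of locs) {
    const matches = path.slice(0, loc.prefix.length) === loc.prefix;
    if (matches && (best === undefined || loc.prefix.length > best.prefix.length)) {
      best = loc;
    }
  }
  return best === undefined ? undefined : best.upstream;
}

console.log(selectUpstream("/api/items")); // be
console.log(selectUpstream("/items"));     // fe
console.log(selectUpstream("/apiary"));    // be (plain string prefix, not a path segment)
```

Note the last case: /api is a plain string prefix, so a path such as /apiary would also go to the back-end. If that matters, a trailing slash (location /api/) narrows the match to paths under /api/.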

Configuring the Proxy

To create the routing specifications in the NGINX server as mentioned above, we use a route configuration file called default.conf, which contains the routes and how NGINX needs to behave for each route. The conf file is as below:

```nginx
upstream fe {
    server client;
}

upstream be {
    server api;
}

server {
    listen 80;

    location / {
        proxy_pass http://fe;
    }

    location /api {
        proxy_pass http://be;
    }
}
```

Observe that for the paths “/” and “/api”, declared with “location” blocks, proxy_pass forwards requests to “http://fe” and “http://be”, which represent the Front-End and Back-End components respectively. fe and be are the names of the upstream blocks at the top of the file, which in turn point to the servers client and api.

We shall see why these upstreams use the names client and api instead of domain names, and how those names are resolved, as we progress.

To create the setup, let’s begin by writing a Dockerfile for each of these three components, describing how its container image is built.

Configuring the NGINX Proxy Component

The NGINX component setup is simple and straightforward: we start from the base NGINX image and copy our route configuration to a specific path under the NGINX configuration directory.

NGINX comes with a default configuration file (default.conf) in the directory /etc/nginx/conf.d. We overwrite this file with our own, so NGINX uses our settings while handling requests.

This component forms the “first point of contact” for our users: whenever users interact with the application, their requests start here.

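```dockerfile
# Start from the official NGINX image
FROM nginx
# Replace the default server configuration with our routing rules
COPY ["./conf/default.conf","/etc/nginx/conf.d/default.conf"]
```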

Configuring the Angular Component

The Angular application calls an API endpoint to fetch the data it renders on a grid. The API base path used for this is configured in a TypeScript class as below:

```typescript
export class ApiConstants {
  public static uri: string = "/api";
}
```

Why have we NOT specified any domain while calling the API? An Angular application runs in the user’s browser, so its logic executes outside the containers, where Docker-internal names such as api can’t be resolved.

The NGINX component solves this issue by routing each incoming request to either the Angular component or the API component, depending on its path prefix.

Although the browser sends the API call to the same domain that served the page, NGINX routes it internally, so the user never needs to know where the API request actually goes.
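Why the relative path works can be shown with the standard URL constructor, which resolves a root-relative path the way a browser does for a request issued from a page: the scheme, host and port come from the page, and only the path comes from the constant. This TypeScript sketch is illustrative; resolveApiUrl is a hypothetical helper, and “items” is an assumed endpoint name, not necessarily the one used in the repository.

```typescript
// Mirrors ApiConstants.uri from the Angular app: a root-relative path, no host.
const apiBasePath: string = "/api";

// Resolves an API call the way the browser does: a root-relative path is
// combined with the scheme, host and port of the page that issued it.
function resolveApiUrl(pageUrl: string, endpoint: string): string {
  return new URL(apiBasePath + "/" + endpoint, pageUrl).toString();
}

// The page is served through the proxy, so the API call goes back to the proxy too.
console.log(resolveApiUrl("http://localhost/items", "items"));
// http://localhost/api/items
console.log(resolveApiUrl("http://example.com:8080/", "items"));
// http://example.com:8080/api/items
```

Because the browser only ever talks to the address it loaded the page from, the same Angular build keeps working wherever the proxy is reachable.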

To create the Dockerfile, we follow the same steps we saw before for creating and deploying an Angular application via Docker.

The process requires us to first build the Angular application for release and then copy the generated files (index.html and the JavaScript bundles) onto a web server that hosts these static files.

We use another NGINX instance (not to be confused with the proxy) to host the Angular files; it listens on port 80 by default.

The Dockerfile looks like below:

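```dockerfile
# Stage 1: build the Angular application for release
FROM node:14-alpine as build
WORKDIR /app
RUN npm install -g @angular/cli
# Install dependencies first so this layer is cached until package.json changes
COPY ./package.json .
RUN npm install
# Copy the sources and produce the release build
COPY . .
RUN npm run build

# Stage 2: serve the compiled static files with NGINX (listens on port 80)
FROM nginx as runtime
# dist/client is the build output folder, by default named after the Angular project
COPY --from=build /app/dist/client /usr/share/nginx/html
```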

Configuring the API Component

The API component houses an ASP.NET Core Web API that returns data to the Angular component. To create its Dockerfile, we use the same approach we used before for creating and deploying an ASP.NET Core application via Docker.

The process requires us to first build and publish the solution in Release mode inside a container that provides the .NET SDK, and then copy the generated binaries (the DLL files) into an image based on the ASP.NET Core runtime, which runs these DLLs.

The Dockerfile for the ASP.NET Core API looks as below:

```dockerfile
# Runtime base image: contains only the ASP.NET Core runtime
FROM mcr.microsoft.com/dotnet/aspnet:6.0 AS base
WORKDIR /app

# Build image: contains the .NET SDK
FROM mcr.microsoft.com/dotnet/sdk:6.0 AS build
WORKDIR /src

# copy all the layers' csproj files into respective folders
# (relative to /src, so they line up with the full source copied below)
COPY ["./ContainerNinja.Contracts/ContainerNinja.Contracts.csproj", "ContainerNinja.Contracts/"]
COPY ["./ContainerNinja.Migrations/ContainerNinja.Migrations.csproj", "ContainerNinja.Migrations/"]
COPY ["./ContainerNinja.Infrastructure/ContainerNinja.Infrastructure.csproj", "ContainerNinja.Infrastructure/"]
COPY ["./ContainerNinja.Core/ContainerNinja.Core.csproj", "ContainerNinja.Core/"]
COPY ["./ContainerNinja.API/ContainerNinja.API.csproj", "ContainerNinja.API/"]

# run restore over API project - this pulls restore over the dependent projects as well
RUN dotnet restore "ContainerNinja.API/ContainerNinja.API.csproj"

COPY . .

# run build over the API project
WORKDIR "/src/ContainerNinja.API/"
RUN dotnet build -c Release -o /app/build

# run publish over the API project
FROM build AS publish
RUN dotnet publish -c Release -o /app/publish

# Final image: runtime only, plus the published output
FROM base AS runtime
WORKDIR /app
COPY --from=publish /app/publish .
# list the published files in the build log (debugging aid)
RUN ls -l
ENTRYPOINT [ "dotnet", "ContainerNinja.API.dll" ]
```

Setting up Docker Compose for orchestration

Docker Compose configures and boots up all of these components together: the Front-End built on Angular, the Back-End ASP.NET Core API, and the NGINX proxy. Each component is declared as a “service” with a specific name, which uniquely identifies it.

The docker-compose.yaml file looks like below:

```yaml
version: "3"

services:
  proxy:
    build:
      context: ./Proxy
      dockerfile: Dockerfile
    ports:
      - "80:80"
    restart: always

  client:
    build:
      context: ./Client
      dockerfile: Dockerfile
    ports:
      - "9000:80"

  api:
    build:
      context: ./API
      dockerfile: Dockerfile
    ports:
      - "5000:80"
```
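The ports entries use Compose’s short “HOST:CONTAINER” syntax, so “9000:80” publishes the client container’s port 80 on port 9000 of the host machine. The TypeScript sketch below parses the simple forms of that syntax to make the two sides explicit. It is illustrative only: parsePortMapping and PortMapping are hypothetical names, and port ranges and IPv6 host addresses are not handled.

```typescript
// Parsed form of one entry under "ports:" in docker-compose.yaml.
interface PortMapping {
  hostIp?: string;       // optional host IP to bind to
  hostPort?: number;     // port published on the host machine
  containerPort: number; // port the process listens on inside the container
  protocol: string;      // "tcp" unless a suffix such as "/udp" is given
}

function toPort(text: string): number {
  if (!/^\d+$/.test(text)) throw new Error("invalid port: " + text);
  return parseInt(text, 10);
}

// Handles "CONTAINER", "HOST:CONTAINER" and "IP:HOST:CONTAINER", each with an
// optional "/protocol" suffix. Port ranges and IPv6 addresses are not handled.
function parsePortMapping(spec: string): PortMapping {
  const slash = spec.indexOf("/");
  const protocol = slash >= 0 ? spec.slice(slash + 1) : "tcp";
  const parts = (slash >= 0 ? spec.slice(0, slash) : spec).split(":");
  switch (parts.length) {
    case 1:
      return { containerPort: toPort(parts[0]), protocol: protocol };
    case 2:
      return { hostPort: toPort(parts[0]), containerPort: toPort(parts[1]), protocol: protocol };
    case 3:
      return { hostIp: parts[0], hostPort: toPort(parts[1]), containerPort: toPort(parts[2]), protocol: protocol };
    default:
      throw new Error("unsupported port spec: " + spec);
  }
}

// The three mappings from the compose file above.
for (const spec of ["80:80", "9000:80", "5000:80"]) {
  const m = parsePortMapping(spec);
  console.log(spec + " -> host " + m.hostPort + ", container " + m.containerPort);
}
```

Inside the Compose network, containers reach each other on the container port (80 for both client and api). The host ports 9000 and 5000 only matter when calling those containers directly from the host, for example while debugging.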

How Routing Works

One major advantage of using Docker Compose to boot up multiple services is that it places all the services configured in the docker-compose file on a single network (by default named after the project with a _default suffix), where the services can reach each other by their service names.

Observe the service names given to each component in the docker-compose file. The conf file inside our NGINX component specifies the hosts for the Angular and ASP.NET Core components as:

```nginx
upstream fe {
    server client;
}

upstream be {
    server api;
}
```

These are exactly the service names of the two components: client for Angular and api for the ASP.NET Core API.

Every request that the NGINX proxy receives from the outside world is forwarded to one of these two components, for example as http://api/api/endpoint or http://client/index.html.
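The doubled /api in http://api/api/endpoint is expected. Because proxy_pass in default.conf names only the upstream (http://be) and no URI, NGINX passes the original request URI through unchanged; if proxy_pass included a URI (for example http://be/), the part of the path matched by the location would be replaced by it instead. The TypeScript sketch below models just these two rules. upstreamUri is a hypothetical name, /api/items is an assumed path, and the model ignores URI normalization, variables in proxy_pass and regex locations.

```typescript
// Builds the URI NGINX requests from the upstream for a prefix location:
//  - proxy_pass without a URI part ("http://be"): the request URI is passed unchanged
//  - proxy_pass with a URI part ("http://be/"): the matched location prefix is
//    replaced by that URI part
function upstreamUri(locationPrefix: string, proxyPass: string, requestUri: string): string {
  const match = /^(https?:\/\/[^/]+)(\/.*)?$/.exec(proxyPass);
  if (match === null) throw new Error("unsupported proxy_pass value: " + proxyPass);
  const origin = match[1];
  const uriPart: string | undefined = match[2]; // undefined when no URI part is given
  if (uriPart === undefined) {
    return origin + requestUri;
  }
  if (requestUri.slice(0, locationPrefix.length) !== locationPrefix) {
    throw new Error("request does not match location " + locationPrefix);
  }
  return origin + uriPart + requestUri.slice(locationPrefix.length);
}

// The configuration used in this article: no URI part, so /api is kept.
console.log(upstreamUri("/api", "http://be", "/api/items"));   // http://be/api/items
console.log(upstreamUri("/", "http://fe", "/index.html"));     // http://fe/index.html
// For comparison, a variant that strips the prefix:
console.log(upstreamUri("/api/", "http://be/", "/api/items")); // http://be/items
```

With the configuration used here, the ASP.NET Core API therefore receives paths that still start with /api, so its routes need to include that prefix.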

To send such a request, NGINX has to resolve the host name (api or client) to an address. On a Compose network, Docker runs an embedded DNS server for this purpose: when a name matches a service on the network, Docker resolves it to the IP address of that service’s container, and the request is sent there.

Simply put, Docker Compose groups all the services on one network, and Docker takes care of mapping each service name to its container’s address.
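The lookup step can be pictured as a table from service name to container address. The TypeScript sketch below only models what Docker’s embedded DNS provides on the Compose network: the IP addresses are invented, and serviceTable and resolveServiceUrl are hypothetical names. In the running stack, a command such as docker-compose exec proxy getent hosts api shows the real answer.

```typescript
// Hypothetical service-name -> container-IP table standing in for the answers
// Docker's embedded DNS gives on the Compose network. The IPs are invented.
const serviceTable: { [service: string]: string } = {
  proxy: "172.18.0.2",
  client: "172.18.0.3",
  api: "172.18.0.4",
};

// Replaces the host part of a URL (a service name) with the container IP.
function resolveServiceUrl(url: string, table: { [service: string]: string } = serviceTable): string {
  const parsed = new URL(url);
  if (!Object.prototype.hasOwnProperty.call(table, parsed.hostname)) {
    throw new Error("no service named " + parsed.hostname + " on this network");
  }
  parsed.hostname = table[parsed.hostname];
  return parsed.toString();
}

console.log(resolveServiceUrl("http://api/api/items"));     // http://172.18.0.4/api/items
console.log(resolveServiceUrl("http://client/index.html")); // http://172.18.0.3/index.html
```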

Running the Setup and Testing the Stack

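To run this setup, we run the following command from the folder that contains the docker-compose.yaml file:

```
docker-compose up
```

This builds the images (if they don’t exist yet) and starts every component defined in the configuration file, placing all of them on a single network. After code changes, docker-compose up --build forces the images to be rebuilt.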

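“Ensure that the ports NGINX forwards to (the upstream server entries used by proxy_pass in default.conf) match the ports the services listen on inside their Docker containers, not the ports published on the host. Since server client; and server api; name no port, NGINX uses port 80, which is the default for both the Angular NGINX container and the .NET 6 ASP.NET Core container.”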
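As an illustration of this port rule, the TypeScript sketch below reads an upstream server address the way NGINX interprets it (port 80 when none is given) and compares it with the port each service listens on inside its container. The containerPorts table is an assumption based on the images’ defaults; parseUpstreamServer and upstreamMatchesContainer are hypothetical helpers.

```typescript
// Assumed container-side ports: the nginx image serving the Angular files and
// the .NET 6 aspnet image both listen on port 80 by default.
const containerPorts: { [service: string]: number } = { client: 80, api: 80 };

// Splits an upstream "server" address such as "api" or "api:8080".
// NGINX uses port 80 when no port is given. Unix sockets and IPv6 are not handled.
function parseUpstreamServer(address: string): { host: string; port: number } {
  if (address.indexOf("unix:") === 0 || address.charAt(0) === "[") {
    throw new Error("address form not handled in this sketch: " + address);
  }
  const colon = address.lastIndexOf(":");
  if (colon === -1) return { host: address, port: 80 };
  const portText = address.slice(colon + 1);
  if (!/^\d+$/.test(portText)) throw new Error("invalid port in " + address);
  return { host: address.slice(0, colon), port: parseInt(portText, 10) };
}

// True when the upstream points at the port the service listens on in its container.
function upstreamMatchesContainer(address: string): boolean {
  const target = parseUpstreamServer(address);
  return containerPorts[target.host] === target.port;
}

console.log(upstreamMatchesContainer("api"));      // true: api:80 is where the API listens
console.log(upstreamMatchesContainer("api:5000")); // false: 5000 is only the published host port
```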


```
Recreating containerninjacleanarchitecture_api_1 ... done
Recreating containerninjacleanarchitecture_proxy_1 ... done
Recreating containerninjacleanarchitecture_client_1 ... done
Attaching to containerninjacleanarchitecture_client_1, containerninjacleanarchitecture_api_1, containerninjacleanarchitecture_proxy_1
... application logs start from here ...
```

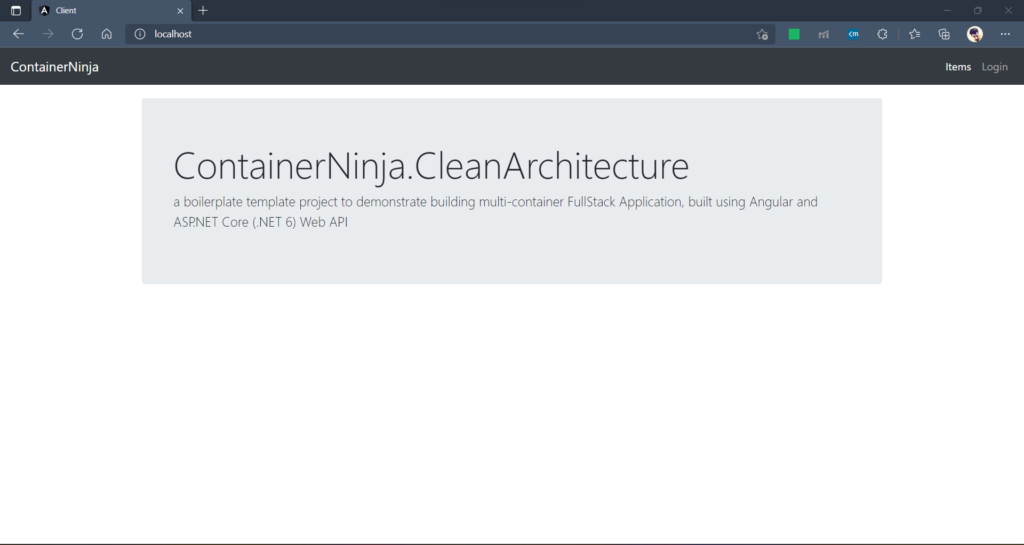

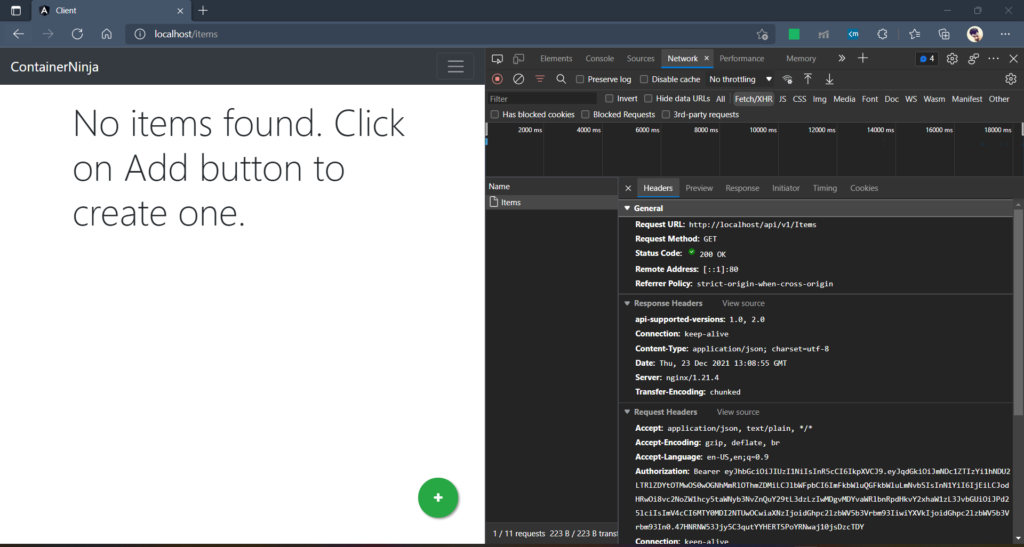

Once the containers are up, browse to http://localhost:80, where the NGINX proxy component listens.

The NGINX component forwards the request to the Angular component at http://client:80, which returns the front-end files for the browser to render.

When we navigate to the Items page, the Angular client calls the API; the NGINX proxy routes that call internally to the ASP.NET Core component, which returns the data.


Conclusion and… boilerplate 🥳

In the world of containerized application design and development, Docker Compose makes life easier when building and deploying full-stack applications. Its orchestration and service-name resolution features simplify how containers interact, so developers can integrate more components with little extra effort.

The code snippets used in this article are part of the ContainerNinja.CleanArchitecture boilerplate project, which has been released recently. The solution aims to demonstrate how to build containerized applications while following Clean Architecture. Do check it out and leave a star if you find the repository useful.

ContainerNinja.CleanArchitecture – GitHub Repository