A cache is a high-speed memory store that applications use to save and retrieve data that is read frequently but updated less often. Applications widely use this technique to avoid repeated database reads for frequently read data, which improves performance and reduces database load and costs. The default in-memory caching approach is sufficient for smaller workloads, but it may fall short under production loads that demand better efficiency and performance. For example, an in-memory cache lives inside a single application process, so it is not shared across multiple instances and is lost whenever the process restarts.

For such systems, we turn to dedicated caching providers that specialize in high-performance, efficient caching. Two popular options that application developers widely use in production are Redis and Memcached.

In this article, let's look at how to configure and use Memcached as a caching layer in an example ASP.NET Core application.

About Memcached –

Memcached is an open-source, high-performance, distributed memory object caching system that helps reduce database load. It keeps data in an in-memory key-value store for small chunks of arbitrary data (strings, objects), such as the results of API calls or database reads.
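Conceptually, the server behaves like a dictionary from keys to values in which each item can carry an expiry time. The `cacheSeconds` argument used later in this article sets that expiry. The toy, single-process `ToyExpiringStore` below (a hypothetical name) models only that idea, using an injectable clock. It is a sketch for intuition and has none of Memcached's networking, memory limits or LRU eviction.

```csharp
using System;
using System.Collections.Generic;

// Toy model of a key-value store with per-item expiry.
// NOT Memcached: no networking, no memory limit, no LRU eviction.
public sealed class ToyExpiringStore
{
    private readonly Dictionary<string, (byte[] Value, DateTime ExpiresAt)> items = new();
    private readonly Func<DateTime> now;

    // The clock is injectable so expiry can be demonstrated without waiting.
    public ToyExpiringStore(Func<DateTime>? clock = null) =>
        now = clock ?? (() => DateTime.UtcNow);

    // Store (or overwrite) a value that stays valid for the given time-to-live.
    public void Set(string key, byte[] value, TimeSpan ttl) =>
        items[key] = (value, now() + ttl);

    // Return the value, or null if the key is missing or has expired.
    public byte[]? Get(string key)
    {
        if (!items.TryGetValue(key, out var entry)) return null;
        if (now() >= entry.ExpiresAt)
        {
            items.Remove(key); // expired entries are dropped lazily, on access
            return null;
        }
        return entry.Value;
    }
}
```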

When to use Memcached?

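- When caching relatively small, static data such as HTML fragments or text.

- When string values are enough: Memcached stores every value as an opaque string of bytes and has no richer data types, so structured objects have to be serialized by the client.

- For data that is mostly read and rarely updated.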
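Because Memcached stores every value as an opaque sequence of bytes, the client has to serialize an object before storing it and deserialize it after reading it back. EnyimMemcachedCore handles this internally. The sketch below only illustrates the round trip, using System.Text.Json and a hypothetical `CachedReader` record. It is not the encoding the library actually uses.

```csharp
using System;
using System.Text.Json;

// Hypothetical DTO used only for this illustration.
public record CachedReader(Guid Id, string Name, string EmailAddress);

public static class ValueCodec
{
    // Object -> raw bytes, i.e. what would travel to the server as the value.
    public static byte[] Encode<T>(T value) =>
        JsonSerializer.SerializeToUtf8Bytes(value);

    // Raw bytes read back from the cache -> typed object.
    public static T? Decode<T>(byte[] data) =>
        JsonSerializer.Deserialize<T>(data);
}
```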

Integrating Memcached with ASP.NET Core –

To demonstrate our example, we use a local Memcached instance as the caching server. To run it, we use the Memcached Docker image, which drastically reduces the configuration effort. Let's prepare a docker-compose file that looks like the following:

#docker-compose.yaml#

version: "3"
services:
  memcached:
    image: memcached:alpine
    ports:
      - "11211:11211"

memcached:alpine is the name of a Memcached image available on Docker Hub. Running this docker-compose file starts a local Memcached server listening on port 11211. To run the file, we use the command:

> docker-compose up

To keep things simple, we take our existing in-memory cache implementation of the ReaderRepo and add Memcached capabilities to it. First, we need to install a Memcached client NuGet package. We use EnyimMemcachedCore, the .NET Core fork of EnyimMemcached, a popular Memcached client library for the .NET Framework. To install the package, we can either run the command:

> dotnet add package EnyimMemcachedCore --version 2.4.3

or add the following reference to the .csproj file:

<PackageReference Include="EnyimMemcachedCore" Version="2.4.3" />

Once this is done, we register the Memcached client service and, further below, the corresponding middleware in the application pipeline of the Startup class. The service is registered as:

services.AddEnyimMemcached(memcachedClientOptions =>
{
    memcachedClientOptions.Servers.Add(new Server
    {
        Address = "127.0.0.1",
        Port = 11211
    });
});

Note that memcachedClientOptions.Servers is a collection of server nodes that together form the distributed cache, and each node is described by an Address and a Port. For our example, we add our local Memcached server at 127.0.0.1 (localhost) on port 11211, as configured in the docker-compose file.
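When Servers has more than one entry, the Memcached servers do not coordinate with each other. The client decides which node stores a given key. A common way to make that decision is consistent hashing, which keeps most keys on the same node when a server is added or removed. The `HashRing` class below is a simplified, hypothetical illustration of the technique. It is not EnyimMemcachedCore's own node-locator code.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Security.Cryptography;
using System.Text;

// Simplified consistent-hash ring. Each server is placed on the ring many times
// ("virtual nodes"); a key belongs to the first server point at or after its hash.
public sealed class HashRing
{
    private readonly SortedDictionary<uint, string> ring = new();
    private readonly uint[] points;

    public HashRing(IEnumerable<string> servers, int virtualNodes = 100)
    {
        foreach (var server in servers)
            for (int i = 0; i < virtualNodes; i++)
                ring[Hash($"{server}#{i}")] = server;

        points = ring.Keys.ToArray(); // SortedDictionary keys are already ascending
        if (points.Length == 0)
            throw new ArgumentException("At least one server is required.");
    }

    // Return the server responsible for the given cache key.
    public string GetServer(string key)
    {
        uint h = Hash(key);
        int idx = Array.BinarySearch(points, h);
        if (idx < 0) idx = ~idx;           // index of the first point >= h
        if (idx == points.Length) idx = 0; // wrap around to the start of the ring
        return ring[points[idx]];
    }

    // First 4 bytes of an MD5 digest, used as a 32-bit position on the ring.
    private static uint Hash(string s)
    {
        using var md5 = MD5.Create();
        byte[] digest = md5.ComputeHash(Encoding.UTF8.GetBytes(s));
        return BitConverter.ToUInt32(digest, 0);
    }
}
```

Removing a node only moves the keys that node owned. Every other key still finds the same nearest point on the ring.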

Alternatively, we can specify these values in appsettings.json and let the service read them from configuration:

services.AddEnyimMemcached(Configuration);

where appsettings.json contains an "enyimMemcached" section with the equivalent configuration:

"enyimMemcached": {
  "Servers": [
    {
      "Address": "localhost",
      "Port": 11211
    }
  ]
}

Both approaches work. We complete this step by adding the EnyimMemcached middleware to the pipeline:

app.UseEnyimMemcached();

At this point, the Memcached client is configured in our application, and we can use it to write values to and read values from the Memcached instance. We add a new implementation of IReaderRepo that uses Memcached in place of the default MemoryCache:

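using System;
using System.Linq;
using Enyim.Caching;

namespace ReadersMvcApp.Providers.Repositories
{
    public interface IReaderRepo
    {
        IQueryable<Reader> Readers { get; }
        Reader GetReader(Guid id);
        Reader AddReader(Reader reader);
    }

    // Decorator that adds Memcached caching on top of another IReaderRepo
    public class MemcachedReaderRepo : IReaderRepo
    {
        private readonly IReaderRepo repo;
        private readonly IMemcachedClient cache;

        // lifetime of each cached item, in seconds
        private const int cacheSeconds = 600;

        public MemcachedReaderRepo(IReaderRepo repo, IMemcachedClient cache)
        {
            this.repo = repo;
            this.cache = cache;
        }

        // queries pass straight through to the wrapped repository
        public IQueryable<Reader> Readers => this.repo.Readers;

        // GetReader() and AddReader() are implemented below
    }
}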

Following the Decorator pattern, MemcachedReaderRepo wraps the ReaderRepo instance injected into it and adds caching on top of its GetReader() and AddReader() implementations.

In GetReader(), we first check whether the record is available in the cache. If it is not, we pull it from the underlying repository and add it to the cache before returning it. This strategy is called lazy loading, also known as cache-aside (and sometimes loosely referred to as read-through).

public Reader GetReader(Guid id)
{
    string key = id.ToString();

    // fetch from the Memcached instance;
    // record is null if the key does not exist in Memcached
    Reader record = this.cache.Get<Reader>(key);

    if (record == null)
    {
        // cache miss: pull from the underlying repository
        record = this.repo.GetReader(id);

        // add to Memcached with the Id as key; skip null so that
        // a missing reader does not cause a NullReferenceException
        if (record != null)
        {
            this.cache.Add(key, record, cacheSeconds);
        }
    }

    // return the record
    return record;
}
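The lazy-loading flow can be exercised without a running server, as in the sketch below. A hypothetical `IKeyValueCache` interface stands in for the two IMemcachedClient calls used above, and a dictionary-backed `FakeCache` plays the role of Memcached. A `CountingRepo` (also hypothetical) counts trips to the "database". The names and types are assumptions made for illustration only.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-ins: Reader model, a minimal cache interface shaped like the
// IMemcachedClient calls used above, and a repository that counts database reads.
public record Reader(Guid Id, string Name);

public interface IKeyValueCache
{
    T? Get<T>(string key) where T : class;
    bool Add(string key, object value, int cacheSeconds);
}

// In-process fake cache; expiry is ignored to keep the example short.
public sealed class FakeCache : IKeyValueCache
{
    private readonly Dictionary<string, object> items = new();

    public T? Get<T>(string key) where T : class =>
        items.TryGetValue(key, out var value) ? value as T : null;

    // Like memcached "add": stores only if the key is not already present.
    public bool Add(string key, object value, int cacheSeconds) =>
        items.TryAdd(key, value);
}

public sealed class CountingRepo
{
    private readonly Dictionary<Guid, Reader> rows = new();
    public int Reads { get; private set; }

    public void Seed(Reader reader) => rows[reader.Id] = reader;

    public Reader? GetReader(Guid id)
    {
        Reads++; // every call counts as one database round trip
        return rows.TryGetValue(id, out var reader) ? reader : null;
    }
}

// Lazy loading / cache-aside: check the cache, fall back to the repository on a miss.
public sealed class CacheAsideReaderRepo
{
    private const int CacheSeconds = 600;
    private readonly CountingRepo repo;
    private readonly IKeyValueCache cache;

    public CacheAsideReaderRepo(CountingRepo repo, IKeyValueCache cache)
    {
        this.repo = repo;
        this.cache = cache;
    }

    public Reader? GetReader(Guid id)
    {
        string key = id.ToString();
        Reader? record = cache.Get<Reader>(key); // 1. try the cache
        if (record != null) return record;       // hit: no database access

        record = repo.GetReader(id);             // 2. miss: query the database
        if (record != null)                      // 3. cache it, but never cache null
            cache.Add(key, record, CacheSeconds);
        return record;
    }
}
```

Reading the same id twice should cost only one database read. An unknown id is never cached, so it reaches the database on every attempt.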

In AddReader(), we add the new record to the database through the repository and push it to the cache immediately afterwards. This way, subsequent reads for the same record are served from the Memcached instance instead of reaching the database. This approach is called the write-through caching strategy.

public Reader AddReader(Reader reader)
{
    // add the record to the database
    var record = this.repo.AddReader(reader);

    // Add() returns a boolean flag indicating whether the item was stored
    if (this.cache.Add(record.Id.ToString(), record, cacheSeconds))
    {
        Console.WriteLine("Added Record to Cache");
    }

    return record;
}
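The write-through idea can be checked in isolation with the minimal sketch below. It uses a hypothetical `WriteThroughReaderRepo` in which plain dictionaries stand in for the database and for Memcached. The point it shows: a record written through `AddReader` can be read back without a single database read.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical types: Reader model, with dictionaries standing in for
// the database and for the Memcached instance.
public record Reader(Guid Id, string Name);

public sealed class WriteThroughReaderRepo
{
    private readonly Dictionary<Guid, Reader> database = new();
    private readonly Dictionary<string, Reader> cache = new();
    public int DatabaseReads { get; private set; }

    // Write-through: persist first, then put the same record into the cache.
    public Reader AddReader(Reader reader)
    {
        database[reader.Id] = reader;         // 1. write to the system of record
        cache[reader.Id.ToString()] = reader; // 2. store (or overwrite) in the cache
        return reader;
    }

    // Reads are served from the cache when possible; misses fall back to the database.
    public Reader? GetReader(Guid id)
    {
        if (cache.TryGetValue(id.ToString(), out var cached)) return cached;
        DatabaseReads++;
        return database.TryGetValue(id, out var row) ? row : null;
    }
}
```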

Finally, we register this Memcached decorator in the service collection, wrapping the original ReaderRepo:

services.AddSingleton<IReaderRepo>(x =>
    ActivatorUtilities.CreateInstance<MemcachedReaderRepo>(x,
        ActivatorUtilities.CreateInstance<ReaderRepo>(x)));

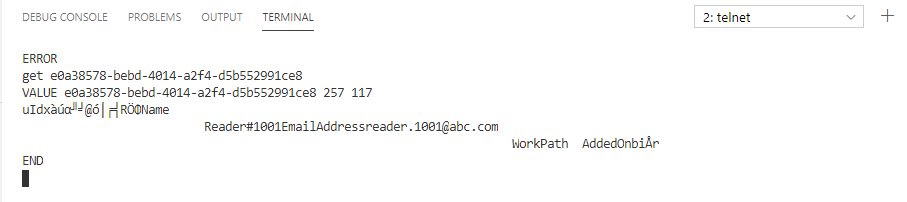

To check that the Memcached server is up and running, connect to it with telnet:

> telnet 127.0.0.1 11211

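This should open a blank session with a waiting cursor, which shows that the connection succeeded. Typing version and pressing Enter should return a VERSION line with the server's version number, confirming that Memcached is responding.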

To verify that records are being cached, we run the ASP.NET Core application and fetch a particular Reader record through the API:

curl 'https://localhost:5001/api/readers/e0a38578-bebd-4014-a2f4-d5b552991ce8' \
  -H 'authority: localhost:5001' \
  -H 'cache-control: max-age=0' \
  --compressed

This returns the single Reader record with Id e0a38578-bebd-4014-a2f4-d5b552991ce8:

{
  "id": "e0a38578-bebd-4014-a2f4-d5b552991ce8",
  "name": "Reader#1001",
  "emailAddress": "reader.1001@abc.com",
  "workPath": null,
  "addedOn": "2020-06-07T19:07:48.386+05:30"
}

This record should now be cached in Memcached, because our GetReader() implementation caches the record before returning it. To verify, run the command below in the telnet session opened earlier. It should return the cached entry for the same record the API returned. Keep in mind that the client stores a serialized form of the object, so the value may not look exactly like the JSON above and may not be fully human-readable.

get e0a38578-bebd-4014-a2f4-d5b552991ce8
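The reply to that get command follows Memcached's text protocol. On a hit, it is a VALUE line of the form `VALUE <key> <flags> <bytes>`, then the data block, then a closing END line. On a miss, it is just END. The hypothetical `MemcachedText.ParseGetReply` helper below is a minimal sketch that extracts the data block from such a single-key reply. It assumes the complete reply has already been read into a byte array.

```csharp
using System;
using System.Text;

public static class MemcachedText
{
    // Parses the reply to a single-key text-protocol "get":
    //   hit:  VALUE <key> <flags> <bytes>[ <cas>]\r\n<data>\r\nEND\r\n
    //   miss: END\r\n
    // Returns the raw data block, or null on a miss.
    public static byte[]? ParseGetReply(byte[] reply)
    {
        int eol = IndexOfCrlf(reply);
        if (eol < 0) throw new FormatException("Incomplete reply.");

        string header = Encoding.ASCII.GetString(reply, 0, eol);
        if (header == "END") return null; // cache miss

        string[] parts = header.Split(' ');
        if (parts.Length < 4 || parts[0] != "VALUE")
            throw new FormatException($"Unexpected reply: {header}");

        int length = int.Parse(parts[3]); // size of the data block in bytes
        int start = eol + 2;              // data begins right after the header's \r\n
        if (reply.Length < start + length)
            throw new FormatException("Truncated data block.");

        var data = new byte[length];
        Array.Copy(reply, start, data, 0, length);
        return data;
    }

    // Position of the first "\r\n" in the buffer, or -1 if there is none.
    private static int IndexOfCrlf(byte[] buffer)
    {
        for (int i = 0; i + 1 < buffer.Length; i++)
            if (buffer[i] == '\r' && buffer[i + 1] == '\n') return i;
        return -1;
    }
}
```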


This is how we can configure and use Memcached as a cache in an ASP.NET Core application. The complete example, including the docker-compose file, is available at https://github.com/referbruv/memcached-aspnetcore-sample