AWS Lambda Layers are an addition to the Lambda deployment model for cloud applications. They provide a way to share reusable components across functions and can help reduce Lambda cold-start times. In this article, let’s look in detail at what Lambda Layers are and how we can add Layers to an ASP.NET Core application deployed to AWS Lambda.

What is a Layer?

What does a layer mean in an application? It can be thought of as a part of the application stack that encapsulates a set of components with similar responsibilities. For example, a data layer encapsulates the data-access logic of the application, and the other components talk to the back end through it. A layer acts independently in the form of a library and is portable and reusable. Similarly, a Lambda Layer is an application layer that can be reused across different Lambda functions by reference.

Take the example of an ASP.NET Core application published to a server or a folder. Alongside the application binary that contains Main(), there are several other binaries for the packages the application uses. The other layers of the application are also included as binaries. All of this increases the size of the deployment package, which is normally unavoidable.

When it comes to deployments in AWS Lambda, there is a cap on the deployment package size, and this size also affects the deployment time and the time taken to boot up the application. In a microservice architecture, each service carries its own copy of these common libraries. This creates the need for a common place from which every microservice that requires a certain library can load it. Such a place enables code reuse and also reduces the deployment package size of each microservice. In an ideal scenario, we pack the common libraries into a “common place”, and when deploying a service to Lambda, the packaging step skips all the binaries already available in that “common place” and packs only what is missing. This is how a Layer works.

How Does a Lambda Layer Work?

- Pack all the common libraries used by the services and deploy them as a layer.

- Specify the layer that the Lambda function should look to when it requires one of those libraries.

- The function package then contains only the base binary in which the function resides, along with the binaries that are not available in the layer.

- At run time, Lambda makes the contents of every configured layer available to the function (extracted under /opt in the execution environment), and the function loads the libraries from there whenever required.
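The packaging step in the list above boils down to a set difference. Here is a minimal sketch in Python; the assembly names and the `plan_package` helper are hypothetical, and this is an illustration of the idea rather than how the AWS tooling is implemented:

```python
def plan_package(function_binaries, layer_binaries):
    """Return the binaries that still have to ship inside the function package."""
    layer = set(layer_binaries)
    # Keep the original order so the result is easy to compare with a build log.
    return [name for name in function_binaries if name not in layer]


# Hypothetical file names, for illustration only.
function_binaries = ["MyApi.dll", "AWSSDK.Core.dll", "AWSSDK.S3.dll", "Newtonsoft.Json.dll"]
layer_binaries = ["AWSSDK.Core.dll", "AWSSDK.S3.dll"]

to_deploy = plan_package(function_binaries, layer_binaries)
print(to_deploy)  # ['MyApi.dll', 'Newtonsoft.Json.dll']
```

Only the binaries missing from the layer end up in the function package; everything else is resolved from the layer at run time.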

How can a Lambda Layer help?

- Layers can reduce the size of a Lambda function’s deployment package considerably.

- They help keep the function lightweight so that it can be maintained easily and deployed quickly.

- Layers are reusable: a single layer can be referenced by as many functions as need its libraries.

- They also help address Lambda cold-start issues to some extent.

Is Everything Good with Layers?

- Layers are immutable: a layer once deployed can’t be modified; only a newer version can be added.

- If a new version of a layer is added, each Lambda function that should pick up the changes needs to be updated to reference the new version.

- Even if a specific version of a Layer is deleted, the Lambda functions that already use that version keep working, so we need to be careful about designing and maintaining these versions.

- Although a layer reduces the deployment package, its contents still count towards the overall size limit of a Lambda function (250 MB unzipped, including all layers).

- Layers make testing Lambda applications somewhat harder.
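The size point is easy to check with a bit of arithmetic. The sketch below assumes the documented Lambda quotas of 250 MB for the unzipped function code plus all attached layers, and at most five layers per function. The helper name and the sizes are made up for illustration:

```python
MAX_UNZIPPED_BYTES = 250 * 1024 * 1024  # assumed quota: function + layers, unzipped
MAX_LAYERS = 5                          # assumed quota: layers per function


def fits_lambda_quota(function_unzipped_bytes, layer_unzipped_bytes):
    """Check a function and its layers against the assumed size and count quotas."""
    if len(layer_unzipped_bytes) > MAX_LAYERS:
        return False
    total = function_unzipped_bytes + sum(layer_unzipped_bytes)
    return total <= MAX_UNZIPPED_BYTES


mb = 1024 * 1024
print(fits_lambda_quota(10 * mb, [100 * mb, 120 * mb]))  # True: 230 MB in total
print(fits_lambda_quota(10 * mb, [200 * mb, 120 * mb]))  # False: 330 MB in total
```

Moving a library into a layer shrinks the function package, but it does not create extra headroom under the total limit.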

Creating Lambda Layers in ASP.NET Core

To create and deploy a .NET Core library as a Lambda Layer, we make use of runtime package stores, a feature that has been available since .NET Core 2.0. A runtime package store groups the packages used by a .NET Core application so that they can be kept outside the application and shared. Creating a store also generates an XML file that lists all the packages in the group. Lambda Layers build on this capability: the tooling reads this XML file to find out which packages are already available and leaves them out when the Lambda function is deployed.

To demonstrate this, let’s create a simple ASP.NET Core API that uses the Serverless Application Model (SAM) for deploying to AWS Lambda. Before that, make sure that you have the AWS Toolkit for Visual Studio and the .NET Core SDK installed. To install the AWS Lambda tools for the .NET Core CLI, run:

> dotnet tool install -g Amazon.Lambda.Tools

If the tools are already installed, update them instead:

> dotnet tool update -g Amazon.Lambda.Tools

Then install the AWS Lambda templates for .NET Core via the CLI:

> dotnet new -i Amazon.Lambda.Templates

Create a new serverless API project from the list of templates we just installed:

> dotnet new serverless.AspNetCoreWebAPI --name AwsLayers.App

This creates a new ASP.NET Core API with deployment settings preconfigured. The project is deployed by means of serverless.template, a JSON file that specifies what to build and how to deploy it. For now, let’s set that aside and look at the libraries that come with the application.

<Project Sdk="Microsoft.NET.Sdk.Web">

<PropertyGroup>

<TargetFramework>netcoreapp3.1</TargetFramework>

<GenerateRuntimeConfigurationFiles>true</GenerateRuntimeConfigurationFiles>

<AWSProjectType>Lambda</AWSProjectType>

</PropertyGroup>

<ItemGroup>

<PackageReference Include="AWSSDK.S3" Version="3.3.110.62" />

<PackageReference Include="AWSSDK.Extensions.NETCore.Setup" Version="3.3.100.1" />

<PackageReference Include="Amazon.Lambda.AspNetCoreServer" Version="5.1.1" />

</ItemGroup>

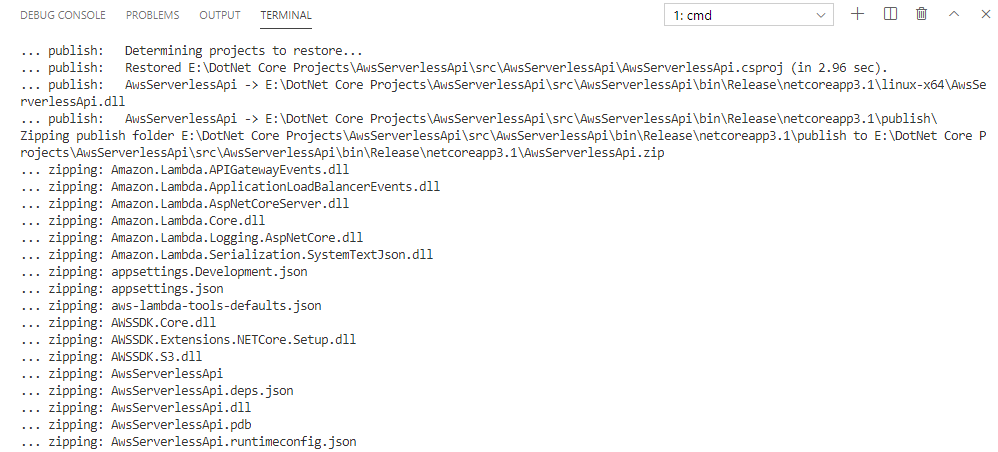

</Project>The libraries this API uses are for setting up the Lambda layer and adding an S3 Client; that this API comes with as a usecase. Let’s try packaging this API and see what happens:

> dotnet lambda package

This publishes the API along with all the referenced packages and creates a deployment zip. This zip can be uploaded in the Lambda console to deploy it as a function. The size of this zip is around 610 KB, even without a single line of our own code. As the API grows and we add more libraries, this size increases, which can affect deployment time and, later, performance. With Layers, we can separate these referenced packages into a runtime store and deploy them separately as a layer. Then we can configure our API to exclude these packages from the Lambda deployment package and load them from the layer at run time instead.

To do this, we need to specify a package store manifest that lists all the packages to be added to the store. This manifest takes the form of a csproj file, so let’s add a new csproj file called Dependencies.csproj and move all the package references used in the API project into it. The file looks like this:

<Project Sdk="Microsoft.NET.Sdk">
  <PropertyGroup>
    <TargetFramework>netcoreapp3.1</TargetFramework>
    <PreserveCompilationContext>true</PreserveCompilationContext>
    <GenerateRuntimeConfigurationFiles>true</GenerateRuntimeConfigurationFiles>
    <AWSProjectType>Lambda</AWSProjectType>
    <OutputType>Library</OutputType>
    <StartupObject />
  </PropertyGroup>
  <ItemGroup>
    <PackageReference Include="AWSSDK.S3" Version="3.3.110.62" />
    <PackageReference Include="AWSSDK.Extensions.NETCore.Setup" Version="3.3.100.1" />
    <PackageReference Include="Amazon.Lambda.AspNetCoreServer" Version="5.1.1" />
  </ItemGroup>
</Project>

The API project now only has a ProjectReference to this Dependencies.csproj:

<Project Sdk="Microsoft.NET.Sdk.Web">
  <PropertyGroup>
    <TargetFramework>netcoreapp3.1</TargetFramework>
    <GenerateRuntimeConfigurationFiles>true</GenerateRuntimeConfigurationFiles>
    <AWSProjectType>Lambda</AWSProjectType>
  </PropertyGroup>
  <ItemGroup>
    <ProjectReference Include="..\dependencies\dependencies.csproj" />
  </ItemGroup>
</Project>

Now we’ll use the ‘dotnet store’ command, which creates a runtime package store.

> dotnet store --manifest dependencies.csproj --runtime linux-x64 --framework netcoreapp3.1 --framework-version 3.1.0 --output bin/dotnetcore/store --skip-optimization

This command builds from the csproj file and publishes all the specified packages into bin/dotnetcore/store (the --output path), along with an artifact.xml file created under the same folder.

The artifact.xml file contains this:

<StoreArtifacts>
  <Package Id="AWSSDK.Core" Version="3.3.106.16" />
  <Package Id="AWSSDK.S3" Version="3.3.110.62" />
  <Package Id="AWSSDK.Core" Version="3.3.100" />
  <Package Id="AWSSDK.Extensions.NETCore.Setup" Version="3.3.100.1" />
  <Package Id="Microsoft.Extensions.Configuration.Abstractions" Version="2.0.0" />
  <Package Id="Microsoft.Extensions.DependencyInjection.Abstractions" Version="2.0.0" />
  <Package Id="Microsoft.Extensions.Logging.Abstractions" Version="2.0.0" />
  <Package Id="Microsoft.Extensions.Primitives" Version="2.0.0" />
  <Package Id="System.Runtime.CompilerServices.Unsafe" Version="4.4.0" />
  <Package Id="Amazon.Lambda.APIGatewayEvents" Version="2.1.0" />
  <Package Id="Amazon.Lambda.ApplicationLoadBalancerEvents" Version="2.0.0" />
  <Package Id="Amazon.Lambda.AspNetCoreServer" Version="5.1.1" />
  <Package Id="Amazon.Lambda.Core" Version="1.1.0" />
  <Package Id="Amazon.Lambda.Logging.AspNetCore" Version="3.0.0" />
  <Package Id="Amazon.Lambda.Serialization.SystemTextJson" Version="2.0.1" />
  <Package Id="Microsoft.Extensions.Configuration" Version="2.1.0" />
  <Package Id="Microsoft.Extensions.Configuration.Abstractions" Version="2.1.0" />
  <Package Id="Microsoft.Extensions.Configuration.Binder" Version="2.1.0" />
  <Package Id="Microsoft.Extensions.DependencyInjection.Abstractions" Version="2.1.0" />
  <Package Id="Microsoft.Extensions.Logging" Version="2.1.0" />
  <Package Id="Microsoft.Extensions.Logging.Abstractions" Version="2.1.0" />
  <Package Id="Microsoft.Extensions.Options" Version="2.1.0" />
  <Package Id="Microsoft.Extensions.Primitives" Version="2.1.0" />
  <Package Id="System.Runtime.CompilerServices.Unsafe" Version="4.5.0" />
</StoreArtifacts>

It lists all the package references inside dependencies.csproj, along with the libraries these packages depend on internally, so that no packages other than these need to be looked up at run time.
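To get a feel for what the manifest holds, it can be read with a few lines of Python. This is a rough sketch, not part of the AWS tooling: the `packages_by_id` helper is hypothetical, and the sample is a shortened copy of the artifact.xml shown above. It groups the recorded versions by package Id, which makes it easy to spot packages that appear more than once:

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

# Shortened copy of the artifact.xml from this article.
ARTIFACT_XML = """
<StoreArtifacts>
  <Package Id="AWSSDK.Core" Version="3.3.106.16" />
  <Package Id="AWSSDK.S3" Version="3.3.110.62" />
  <Package Id="AWSSDK.Core" Version="3.3.100" />
  <Package Id="Amazon.Lambda.Core" Version="1.1.0" />
</StoreArtifacts>
"""


def packages_by_id(xml_text):
    """Map each package Id to the list of versions recorded in the manifest."""
    versions = defaultdict(list)
    root = ET.fromstring(xml_text.strip())
    for package in root.iter("Package"):
        versions[package.get("Id")].append(package.get("Version"))
    return dict(versions)


store = packages_by_id(ARTIFACT_XML)
multi = {pkg: vers for pkg, vers in store.items() if len(vers) > 1}
print(multi)  # {'AWSSDK.Core': ['3.3.106.16', '3.3.100']}
```

In the full manifest above, several packages are stored in two versions because different top-level packages depend on different versions of them.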

Zip the dotnetcore folder into a package.zip file and copy artifact.xml to the same path as package.zip. Upload these two files to an S3 bucket, into a folder such as /dependenciesLayer, which we will use as we move forward.

In the Lambda section of the AWS Console, click Layers under Additional Resources, and then click Create Layer.
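The zipping step can be scripted. What matters is that the entries inside package.zip keep the dotnetcore/store prefix, since Lambda extracts layer contents under /opt and the store is then expected at the folder named in the layer description. A minimal sketch with a hypothetical `zip_store` helper, demonstrated on a throwaway folder rather than a real `dotnet store` output (the upload to S3 is not shown):

```python
import os
import tempfile
import zipfile


def zip_store(root_dir, folder_name, zip_path):
    """Zip root_dir/folder_name so that entries keep the folder_name/ prefix."""
    source = os.path.join(root_dir, folder_name)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for current, _dirs, files in os.walk(source):
            for file_name in files:
                full_path = os.path.join(current, file_name)
                # Entry names are relative to root_dir, e.g. dotnetcore/store/...
                arcname = os.path.relpath(full_path, root_dir).replace(os.sep, "/")
                archive.write(full_path, arcname)
    return zip_path


# Demo on a temporary folder that mimics the layout produced by `dotnet store`.
with tempfile.TemporaryDirectory() as work:
    store = os.path.join(work, "dotnetcore", "store", "x64", "netcoreapp3.1")
    os.makedirs(store)
    with open(os.path.join(store, "example.dll"), "wb") as handle:
        handle.write(b"placeholder")
    package = zip_store(work, "dotnetcore", os.path.join(work, "package.zip"))
    with zipfile.ZipFile(package) as archive:
        names = archive.namelist()

print(names)  # ['dotnetcore/store/x64/netcoreapp3.1/example.dll']
```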

In the fields, give these values:

- Name: dependenciesLayer

- Description: {"Nlt":1,"Dir":"dotnetcore/store","Op":0,"Buc":"s3_bucket_where_packages_are_uploaded","Key":"dependenciesLayer/artifact.xml"}

- Compatible Runtimes: dotnetcore3.1

Select the Upload from S3 option, give the path of the package.zip we uploaded earlier, and click Create. This completes the Layer creation. Copy the generated Layer ARN, which we will use in our API project.
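The Description value has to be valid JSON with plain double quotes, so it is safer to generate it than to type it by hand. A small sketch follows; the `layer_description` helper and the bucket name are hypothetical, and the field names and the values of Nlt and Op are simply copied from the description used in this article:

```python
import json


def layer_description(bucket, manifest_key, store_dir="dotnetcore/store"):
    """Build the JSON description string with the fields used in this article."""
    fields = {
        "Nlt": 1,             # value copied from the article
        "Dir": store_dir,     # folder inside the layer zip that holds the store
        "Op": 0,              # value copied from the article
        "Buc": bucket,        # S3 bucket that holds artifact.xml
        "Key": manifest_key,  # S3 key of artifact.xml
    }
    # Compact separators produce the same shape as the value shown above.
    return json.dumps(fields, separators=(",", ":"))


description = layer_description("my-example-bucket", "dependenciesLayer/artifact.xml")
print(description)
```

Pasting the printed string into the Description field avoids the curly quotes that some editors insert automatically.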

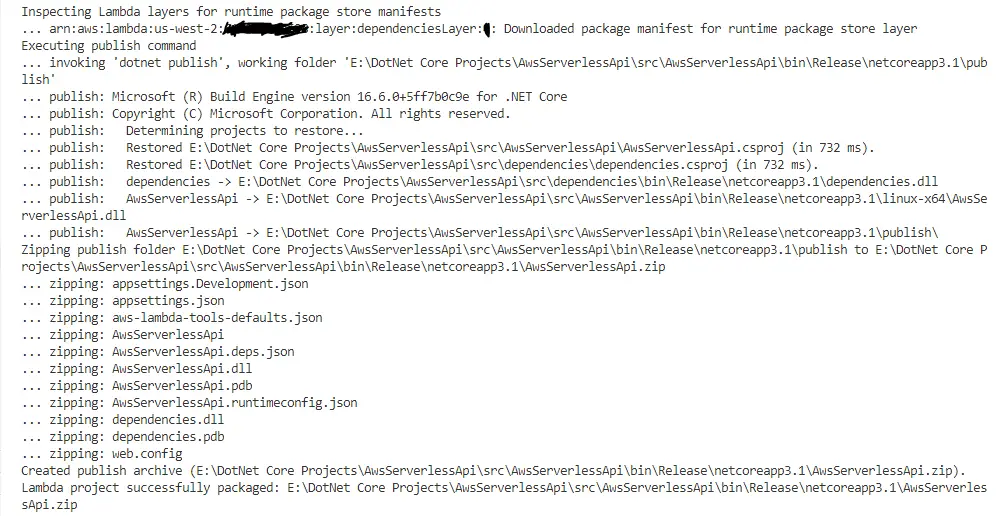

Back in the API project, let’s run the package command again, this time passing another parameter with the layer ARN we just copied.

> dotnet lambda package --function-layers arn:aws:lambda:us-west-2:123456789:layer:dependenciesLayer:1

Observe the log: it now excludes all the packages that are present in Dependencies.csproj and adds just two binaries to the deployment zip, the API binary and the Dependencies binary. Also notice the size of the zip file: it’s now just 61 KB!

From 610 KB without a Layer to just 61 KB with a Layer attached! This Layer can now be reused across all the Lambda functions we deploy in the future, and they can use the core Lambda packages for .NET Core without having to include them in the deployment package. Pretty awesome, right?
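Since every function pins one specific layer version, it helps to be able to see which version an ARN points to. The value passed to --function-layers earlier ends in the version number. The sketch below splits such an ARN into its parts; the `parse_layer_arn` helper is hypothetical and assumes the eight-part shape of the ARN used in this article:

```python
from typing import NamedTuple


class LayerVersionArn(NamedTuple):
    region: str
    account_id: str
    layer_name: str
    version: int


def parse_layer_arn(arn):
    """Split a layer version ARN of the form arn:<partition>:lambda:<region>:<account>:layer:<name>:<version>."""
    parts = arn.split(":")
    if len(parts) != 8 or parts[0] != "arn" or parts[2] != "lambda" or parts[5] != "layer":
        raise ValueError(f"not a layer version ARN: {arn}")
    return LayerVersionArn(parts[3], parts[4], parts[6], int(parts[7]))


parsed = parse_layer_arn("arn:aws:lambda:us-west-2:123456789:layer:dependenciesLayer:1")
print(parsed.layer_name, parsed.version)  # dependenciesLayer 1
```

When a new layer version is published, only the trailing number changes, and each function has to be redeployed with the new ARN to pick it up.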

In this way, we can use Layers to reduce our deployment sizes and reuse the same packages across other Lambda functions.

In the next article, we shall see how to adapt this approach to pack our own custom .NET Core library into a layer and use it in this project.